Overview

Welcome to the essential guide to ETL (Extract, Transform, Load) tools for R, a programming language widely used for data analysis and statistical computing. ETL processes let organizations pull data from diverse sources, improve its quality, and shorten the path from raw data to actionable insights. For R users, ETL tools streamline moving data into a database or spreadsheet, so that it is both accessible and ready for the kind of complex analysis R is known for. On this page, we look at the R programming language, survey ETL tools suited to R data workflows, and walk through use cases that show the practicality and limitations of using R for ETL tasks. We also introduce Sourcetable as an alternative to traditional ETL for R, for those who want to integrate and analyze their data efficiently. A Q&A section at the end answers common questions about ETL with R data, so you are well equipped to get the most out of your data.

What is R Programming Language?

R is a language and environment for statistical computing and graphics. It is an implementation of the S language, which was developed at Bell Laboratories by John Chambers and colleagues; R is part of the GNU project and extends S's capabilities. As both a statistics system and an environment for implementing new statistical techniques, R is well suited to linear and nonlinear modeling, classical statistical tests, time-series analysis, classification, and clustering.

The R programming language is open source: its source code was released under the GNU General Public License in 1995. It is widely used for predictive analytics and data visualization, and it is especially popular in academic settings because it is robust, full-featured, and free. Development was started by Ross Ihaka and Robert Gentleman at the University of Auckland, and the name 'R' comes partly from the first letters of their first names and partly as a play on the name S.

As a versatile tool for data analysis, R is used in a wide range of applications, including machine learning, deep learning, and data science. It supports distributed computing through packages and is platform-independent, running on Windows, Linux, and macOS. Although R can be slower than languages such as Python and MATLAB for some workloads, and tends to consume a lot of memory because it holds data in RAM, it remains a top choice for data analysts, research programmers, and quantitative analysts, and is used by major tech companies such as Google, Facebook, and Twitter.

R's capabilities are extended by a rich ecosystem of packages distributed through the Comprehensive R Archive Network (CRAN), which hosts over 10,000 packages. Beyond using these packages, users can write their own objects, functions, and packages tailored to their specific needs. R also integrates with other languages and statistical packages, which makes it a flexible tool in a programmer's arsenal.

ETL Tools for R Programming Language

The R Project provides a robust environment for statistical computing and graphics, and it can also be used to build Extract, Transform, and Load (ETL) workflows. Among the ETL tools that work with R, Apache Spark stands out: it is an open-source engine that runs computations in parallel and is advertised as up to 100 times faster than Hadoop MapReduce for in-memory workloads. R users can drive Spark through interfaces such as SparkR and sparklyr, which makes it a strong choice for large R-based ETL operations.
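Much of Spark's speed comes from splitting data into partitions, processing each partition in parallel, and combining the partial results. The sketch below illustrates that pattern on a single machine using base R's parallel package; it is not Spark or sparklyr, and the sales data, the partition count, and the function name partial_agg are hypothetical. On Windows, mclapply cannot fork processes, so the sketch falls back to a single core there.

```r
# Partition -> partial aggregate -> combine, on one machine.
# Illustrative only: this does NOT use Spark or sparklyr.
library(parallel)

set.seed(42)
# Hypothetical sales data standing in for a large extract.
sales <- data.frame(
  region = sample(c("north", "south", "east"), 10000, replace = TRUE),
  amount = round(runif(10000, min = 10, max = 500), 2)
)

# 1. Split rows into partitions (round-robin assignment).
n_parts <- 4
partitions <- split(sales, rep_len(seq_len(n_parts), nrow(sales)))

# 2. Per partition, compute sums and counts by region (hypothetical helper).
partial_agg <- function(part) {
  sums   <- tapply(part$amount, part$region, sum)
  counts <- tapply(part$amount, part$region, length)
  data.frame(region = names(sums),
             total  = as.numeric(sums),
             n      = as.numeric(counts))
}

# Forking is unavailable on Windows, so use one core there.
cores <- if (.Platform$OS.type == "windows") 1L else 2L
partials <- mclapply(partitions, partial_agg, mc.cores = cores)

# 3. Combine partial sums and counts, then compute the mean per region.
combined <- aggregate(cbind(total, n) ~ region,
                      data = do.call(rbind, partials), FUN = sum)
combined$mean_amount <- combined$total / combined$n
print(combined)
```

Because sums and counts can be added across partitions, the combined means match those computed on the full data set, which is why aggregations like this parallelize well.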

Pentaho Kettle (Pentaho Data Integration) is another significant open-source ETL tool. It uses Pentaho's metadata-driven integration approach and includes an R script executor step, so R code can run inside Kettle integration jobs. The R package etl (beanumber/etl) targets medium-sized data and loads its output into SQL databases, which makes it a practical option for those specific integration needs. uptasticsearch is an R package for pulling data from Elasticsearch into R, although it has less documentation than comparable packages such as elastic.
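The etl package organizes work as separate extract, transform, and load steps. The following base R sketch mirrors that three-step structure without using the etl package: the function names extract_step, transform_step, and load_step, the inline CSV, and the rule that drops non-positive amounts are all hypothetical, and a CSV file in a temporary directory stands in for the SQL database that etl would load into.

```r
# Three-step ETL sketch in base R. Function names are hypothetical and are
# NOT the etl package's API.

# Extract: read raw records (inline CSV stands in for a downloaded file).
extract_step <- function() {
  raw <- "id,date,amount
1,2024-01-03,120.50
2,2024-01-04,-5.00
3,2024-01-04,80.00"
  read.csv(text = raw, stringsAsFactors = FALSE)
}

# Transform: parse dates, apply a hypothetical filtering rule, derive a column.
transform_step <- function(df) {
  df$date <- as.Date(df$date)                      # parse ISO 8601 dates
  df <- df[df$amount > 0, , drop = FALSE]          # drop refunds (assumed rule)
  df$amount_cents <- as.integer(round(df$amount * 100))
  df
}

# Load: write to a target file (a stand-in for a SQL table).
load_step <- function(df, dir = tempdir()) {
  path <- file.path(dir, "sales_clean.csv")
  write.csv(df, path, row.names = FALSE)
  path
}

out_path <- load_step(transform_step(extract_step()))
loaded <- read.csv(out_path)
print(loaded)
```

Keeping each step in its own function makes it easy to rerun or test one stage without repeating the others.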

For large datasets, jwijffels/ETLUtils provides utilities for moving big query results from databases such as MySQL, Oracle, PostgreSQL, and Apache Hive into ff objects (the ff package is available on CRAN). RETL is an emerging ETL tool in the R ecosystem. Among paid services, Panoply offers a straightforward ODBC connection to RStudio, and Blendo simplifies extracting data from a variety of sources. Stitch rounds out the options as a self-service ETL tool well suited to developers.

Optimize Your R ETL Process with Sourcetable

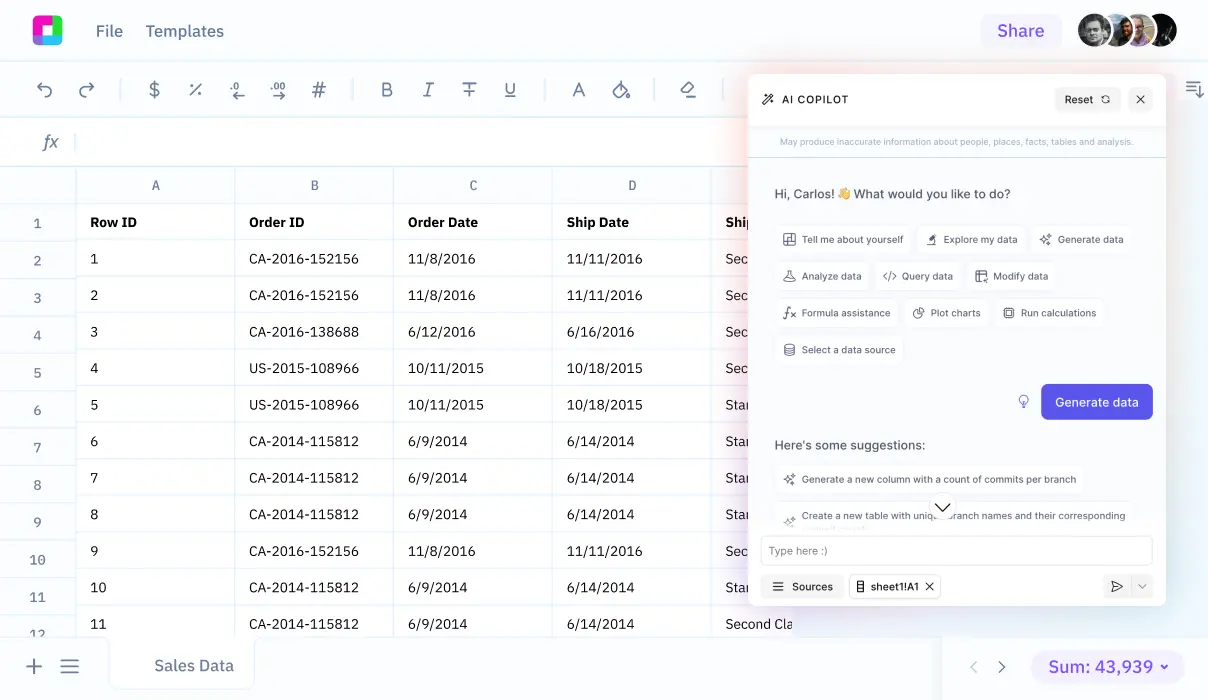

When working with data in R, a robust ETL process keeps data accurate and efficiently managed. Sourcetable offers R users a way to load data into a spreadsheet-like interface without the complexity of configuring a third-party ETL tool or building an in-house solution. With Sourcetable, users can sync live data from almost any application or database, automating the ETL process and strengthening their business intelligence.

One of the key benefits of using Sourcetable for your ETL needs is its ability to streamline data management tasks. Instead of juggling multiple tools or investing time and resources into building a custom ETL solution, Sourcetable simplifies the workflow by allowing you to automatically pull in data from various sources directly into an intuitive, spreadsheet-like environment. This not only saves time but also reduces the potential for errors that can occur during data transfer and transformation.

Sourcetable's emphasis on automation and a user-friendly interface also suits users who value the flexibility and familiarity of spreadsheets. Users can query and manipulate data easily, which supports better decision-making and quick insights without extensive technical expertise. Where agility and real-time analysis matter, Sourcetable offers an alternative to traditional ETL tools that combines simplicity and efficiency.

Common Use Cases

- Use case 1: ETL for daily batch processing of on-premise data for analysis
- Use case 2: ETL operations carried out by a team of R specialists
- Use case 3: Creating and interacting with a SQL database for medium-sized data using the etl package
- Use case 4: Initializing a SQL database for medium-sized data, then updating it without re-initializing
- Use case 5: Cleaning up a SQL database after ETL operations using the etl package
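Updating an existing database without re-initializing it is often done with an upsert: rows with new keys are inserted, rows whose keys already exist are replaced, and everything else is left untouched. The sketch below shows that logic with base R data frames standing in for SQL tables; upsert_rows and the example data are hypothetical, and in an actual database the same step would be carried out with SQL statements.

```r
# Upsert sketch: 'existing' stands in for a table already in the database,
# 'incoming' for the latest batch. Rows are matched on a key column.
upsert_rows <- function(existing, incoming, key = "id") {
  # Keep only existing rows whose key does not appear in the new batch.
  kept <- existing[!(existing[[key]] %in% incoming[[key]]), , drop = FALSE]
  # Append the whole batch: new keys are inserted, repeated keys replace old rows.
  out <- rbind(kept, incoming)
  out <- out[order(out[[key]]), , drop = FALSE]
  rownames(out) <- NULL
  out
}

existing <- data.frame(id = c(1, 2, 3), amount = c(10, 20, 30))
incoming <- data.frame(id = c(3, 4), amount = c(35, 40))

table_now <- upsert_rows(existing, incoming)
print(table_now)
# id 3 now has amount 35, and id 4 has been added.
```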

Frequently Asked Questions

What are ETL tools used for in R programming language?

ETL tools for R extract data from source systems, clean and reshape it, and load it into R or a database, so that it is ready for statistical analysis, data visualization, data manipulation, predictive modeling, and forecasting.

Can ETL tools for R handle large data sets?

Yes. Some ETL tools for R are designed for large data sets, such as jwijffels/ETLUtils, which loads database query results into ff objects, and the ff package itself, which stores large data in files on disk and maps only the parts being accessed into RAM, so R can work with data sets larger than available memory.
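The key idea behind ff and ETLUtils is to avoid holding the whole data set in memory at once. As a rough base R analogue that uses neither package, the sketch below reads a CSV file a fixed number of lines at a time and keeps only running totals in memory. The generated file, the chunk size of 1,000 lines, and the column names are illustrative assumptions.

```r
# Chunked processing sketch: read a CSV in fixed-size chunks and keep only
# running totals in memory. Does NOT use ff or ETLUtils.

# Create a sample file standing in for a large extract (hypothetical data).
path <- tempfile(fileext = ".csv")
write.csv(data.frame(id = 1:10000, value = rep(c(1, 2, 3, 4), 2500)),
          path, row.names = FALSE)

chunk_size <- 1000
con <- file(path, open = "r")
header <- readLines(con, n = 1)                   # read the header line once
col_names <- gsub('"', "", strsplit(header, ",")[[1]])  # write.csv quotes names

running_sum <- 0
running_n <- 0
repeat {
  lines <- readLines(con, n = chunk_size)
  if (length(lines) == 0) break                   # end of file reached
  chunk <- read.csv(text = lines, header = FALSE, col.names = col_names)
  running_sum <- running_sum + sum(chunk$value)
  running_n <- running_n + nrow(chunk)
}
close(con)

mean_value <- running_sum / running_n
print(mean_value)
```

Memory use here depends on the chunk size rather than the file size, which is the property that makes disk-backed approaches suitable for large data.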

How do ETL tools assist with data preprocessing in R?

ETL tools are useful for data preprocessing tasks such as loading .csv files, imputing missing data, and applying transformations necessary for further analysis or modeling.
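As a minimal sketch of those preprocessing steps in base R, the example below loads CSV data, fills missing numeric values with the column median, and derives transformed columns. The data, the column names, and the choice of median imputation are assumptions for illustration; other imputation strategies may suit your data better.

```r
# Preprocessing sketch: load CSV data, impute missing values, transform.
raw_csv <- "customer,age,income
A,34,52000
B,,61000
C,45,
D,29,48000"

df <- read.csv(text = raw_csv, stringsAsFactors = FALSE)  # empty fields become NA

# Replace NAs in a numeric vector with the median of its observed values.
impute_median <- function(x) {
  x[is.na(x)] <- median(x, na.rm = TRUE)
  x
}
num_cols <- vapply(df, is.numeric, logical(1))
df[num_cols] <- lapply(df[num_cols], impute_median)

# Transform: log-scale income (all positive here) and standardize age.
df$log_income <- log(df$income)
df$age_z <- as.numeric(scale(df$age))
print(df)
```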

Are there any ETL tools with a graphical user interface (GUI) for R?

Yes, Rattle is a popular GUI for data mining in R, which provides statistical and visual summaries, data transformation, model building, and model performance graphics.

What are some examples of ETL functions included in R?

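Base R functions commonly used in ETL steps include cbind(), which combines objects column-wise (for example, adding a column to a data frame); merge(), which joins two data frames on shared key columns; and date(), which returns the current system date and time as a character string that can be used to stamp loaded data.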
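The sketch below uses these base R functions in a small, hypothetical ETL step: cbind() adds an amount column, merge() joins orders to customers on customer_id, and each row is stamped with a load time. Because date() returns a character string, the sketch uses Sys.time(), which returns a POSIXct date-time, for the stamp; the order and customer data are made up for illustration.

```r
# Hypothetical order and customer tables.
orders <- data.frame(order_id = 1:3, customer_id = c(10, 20, 10))
customers <- data.frame(customer_id = c(10, 20), name = c("Acme", "Globex"))

# cbind(): add a column to a data frame.
orders <- cbind(orders, amount = c(250, 75, 120))

# merge(): join the two data frames on their shared key column.
joined <- merge(orders, customers, by = "customer_id")

# date() gives a character string; Sys.time() gives a POSIXct value that is
# easier to compare and compute with, so it is used for the load stamp.
print(date())
joined$loaded_at <- Sys.time()
print(joined)
```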

Conclusion

In summary, R, renowned for its statistical computing and graphics capabilities, works with a variety of ETL tools that cater to different data needs. With options ranging from open-source projects such as Spark and Kettle, to specialized packages such as etl (beanumber/etl) for medium data, to commercial offerings such as Panoply, Blendo, and Stitch, users have plenty of ways to streamline their ETL processes. R's ETL capabilities, particularly useful for wide and varied datasets, are further extended by packages such as DBI (a common interface to databases), dbplyr (which translates dplyr code into SQL), and sparklyr (an R interface to Apache Spark), so extraction, transformation, and loading can be scripted and managed within a single environment. For those seeking an alternative to traditional ETL tools, Sourcetable offers seamless ETL into spreadsheets. We invite you to sign up for Sourcetable and start simplifying your data operations today.