Export Hive to CSV

Introduction

Exporting data from Hive to CSV is a crucial process for data analysis, reporting, and sharing. This guide provides a step-by-step approach to efficiently extract your data from Hive.

We will cover the necessary commands, tools, and best practices to ensure a smooth export experience. Additionally, you'll learn about common challenges and how to troubleshoot them.

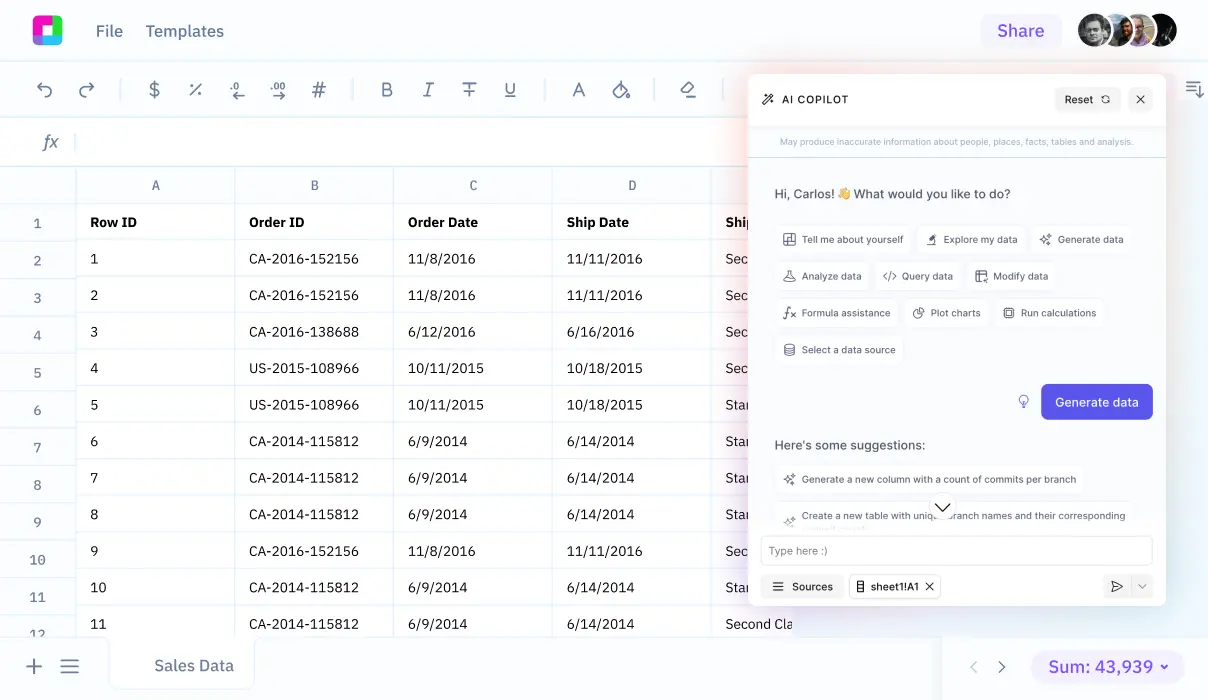

Finally, we will explore how Sourcetable lets you analyze your exported data with AI in a simple-to-use spreadsheet.

Exporting Data to CSV Format from Hive

Using the INSERT OVERWRITE LOCAL DIRECTORY Command

To export data from Hive to CSV, you can use the INSERT OVERWRITE LOCAL DIRECTORY command. This command writes the query results to a local directory that you specify, replacing any existing contents of that directory. The output is one or more delimited text files inside the directory rather than a single named CSV file. For example: INSERT OVERWRITE LOCAL DIRECTORY '/home/user/export' ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' SELECT * FROM table_name;.

Row Format Delimited Fields

To ensure that the data is in CSV format, set the row format to delimited with fields terminated by a comma. This is done using the ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' option in the INSERT OVERWRITE DIRECTORY command. This tells Hive to generate the output with commas separating each field.
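Hive's delimited output does not quote fields, so a value that itself contains a comma produces an extra column in the exported file. A minimal sketch of a sanity check follows; the helper name check_field_count is hypothetical and not part of Hive.

```shell
# Hypothetical helper: list lines whose comma-separated field count differs
# from the expected number of columns. Exits with status 1 if any are found.
check_field_count() {
  expected="$1"
  file="$2"
  awk -F',' -v n="$expected" '
    NF != n { printf "line %d: %d fields\n", NR, NF; bad = 1 }
    END { exit bad + 0 }
  ' "$file"
}

# Example (path and column count are placeholders):
#   check_field_count 3 /home/user/export/000000_0
```

If the check reports mismatches, exporting with a delimiter that does not occur in the data (such as a tab) and converting afterwards is one possible workaround.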

Exporting Data to HDFS

If you need to export data to HDFS, use the INSERT OVERWRITE DIRECTORY command without the LOCAL keyword. For instance: INSERT OVERWRITE DIRECTORY '/user/hive/warehouse/output' ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' SELECT * FROM table_name;. This will save the output in HDFS rather than a local directory.

Including Column Headers

Column headers can be included in the CSV file by setting the Hive configuration property hive.cli.print.header=true. For example: hive -S -e "set hive.cli.print.header=true; SELECT * FROM table_name;" | sed 's/[\t]/,/g' > /home/user/export.csv. This will ensure that the first row of the CSV file contains the column headers.
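Depending on the Hive version and settings, the printed header may carry a table prefix on each column (for example table_name.id). Setting hive.resultset.use.unique.column.names=false is one way to avoid that. As an alternative sketch, the prefix can be stripped from the first line only; the function name strip_header_prefix is hypothetical.

```shell
# Hypothetical helper: remove a "table." prefix from every column name on the
# header line (line 1). Data lines pass through unchanged.
strip_header_prefix() {
  sed '1s/[^,.]*\.//g'
}

# Example (assumes the comma-converted output described above):
#   hive -S -e "set hive.cli.print.header=true; SELECT * FROM table_name;" \
#     | sed 's/[\t]/,/g' | strip_header_prefix > /home/user/export.csv
```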

Using Beeline for Export

Another way to export data to CSV is by using Beeline. Connect to Hive using Beeline and execute an export command like: beeline -u jdbc:hive2://hostname:10000 -n hive -e "INSERT OVERWRITE DIRECTORY '/tmp/export' ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' SELECT * FROM table_name". This saves the query results to HDFS.

Concatenating Files

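When Hive exports data, it may split the output into multiple part files. To concatenate these into a single CSV file, use the hadoop fs -cat command. For example: hadoop fs -cat /tmp/export/* | hadoop fs -put - /tmp/export/final_export.csv. This combines all part files into one CSV file. Because the combined file is written into the same directory, rerunning the command later would include final_export.csv in its own input, so consider writing it to a different directory.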
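For exports written with the LOCAL keyword, the same merge can be done with plain cat. The sketch below assumes the part files sit directly in the export directory; the function name merge_parts and the paths are hypothetical.

```shell
# Hypothetical helper: concatenate every part file in a local export directory
# into one CSV file. The glob expands in sorted order and skips hidden files
# (such as checksum files), so only the visible part files are merged.
# Write the output OUTSIDE the export directory so it is not read as input.
merge_parts() {
  export_dir="$1"
  out_file="$2"
  cat "$export_dir"/* > "$out_file"
}

# Example:
#   merge_parts /home/user/export /home/user/export.csv
# For exports that live in HDFS, `hadoop fs -getmerge <src> <localdst>`
# performs a similar merge into a local file.
```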

Using Command Line Tools

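You can use command-line tools like sed and perl to format Hive query output as CSV. For example: hive -e 'SELECT * FROM table_name' | sed 's/[[:space:]]\+/,/g' > /home/user/output.csv. This command replaces every run of whitespace, tabs and spaces alike, with a single comma. It works when no value contains spaces; a value such as New York would otherwise be split into two fields. Note that \+ is a GNU sed extension.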
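Since the Hive CLI separates columns with tabs, a conversion that splits only on tabs and quotes fields containing commas or double quotes is usually safer. This is a minimal sketch using awk; the function name tsv_to_csv is hypothetical, and it assumes no field contains an embedded tab or newline.

```shell
# Hypothetical helper: convert tab-separated input to CSV.
# Fields containing a comma or a double quote are wrapped in double quotes,
# and embedded double quotes are doubled.
tsv_to_csv() {
  awk -F'\t' -v OFS=',' '{
    for (i = 1; i <= NF; i++) {
      if ($i ~ /[",]/) {
        gsub(/"/, "\"\"", $i)
        $i = "\"" $i "\""
      }
    }
    $1 = $1   # force awk to rebuild the record with OFS
    print
  }'
}

# Example:
#   hive -e 'SELECT * FROM table_name' | tsv_to_csv > /home/user/output.csv
```

Spaces inside values are left alone, so a value like New York stays in one field.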

How to Export Your Data to CSV Format from Hive

Exporting data from Hive to a CSV format can be done through various methods. Below, we outline the steps and commands necessary to perform this task efficiently.

Using Hive Command

To export a Hive table to a CSV file, use the INSERT OVERWRITE DIRECTORY command. This command writes the query results directly to a specified directory. To ensure the output is in CSV format, include the ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' clause. Example:

INSERT OVERWRITE DIRECTORY '/user/data/output/test' ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' SELECT column1, column2 FROM table1;

To export the CSV file to a local directory, add the LOCAL keyword:

INSERT OVERWRITE LOCAL DIRECTORY '/home/user/staging' ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' SELECT * FROM hugetable;

Handling Multiple Files

Exporting a large Hive table may result in multiple CSV files. To concatenate these into a single file, combine them on the client side using command-line tools. Example for HDFS:

hadoop fs -cat /tmp/data/output/* | hadoop fs -put - /tmp/data/output/combined.csv

Using Beeline CLI

Beeline CLI can also be used to export a Hive table to a CSV file. Example for exporting to HDFS:

beeline -u jdbc:hive2://my_hostname:10000 -n hive -e "INSERT OVERWRITE DIRECTORY '/tmp/data/my_exported_table' ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' SELECT * FROM my_exported_table;"

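To concatenate the exported part files into a single file, use the following. Note that this command does not add a header row; the combined file contains data rows only: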

hadoop fs -cat /tmp/data/my_exported_table/* | hadoop fs -put - /tmp/data/my_exported_table/combined.csv
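To include a header row, one option is to emit it ahead of the concatenated data in the same pipeline. A small sketch, where the function name with_header and the column names are placeholders:

```shell
# Hypothetical helper: print a header line, then pass standard input through.
with_header() {
  header="$1"
  printf '%s\n' "$header"
  cat
}

# Example (column names are placeholders for the real table columns):
#   hadoop fs -cat /tmp/data/my_exported_table/* \
#     | with_header 'column1,column2' \
#     | hadoop fs -put - /tmp/data/my_exported_table/combined.csv
```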

Using hive -e Command

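The hive -e command runs a query and prints the result to standard output, which you can redirect to a file. The output is tab-separated by default, so the file below is a TSV file despite its .csv extension unless you convert the delimiter. Example: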

hive -e 'SELECT * FROM some_table' > /home/yourfile.csv

To convert the tab-separated output to a custom delimiter such as a comma:

hive -e 'SELECT * FROM some_table' | sed 's/[

Additional Tips

For more customization, consider using a User-Defined Function (UDF) to handle special characters and complex data types. These methods ensure that your data is exported in the desired format, ready for analysis or sharing.

By following these steps, you can efficiently export your Hive data to CSV format, ensuring compatibility and ease of use for further data processing tasks.

Use Cases Unlocked by Hive

Data Warehousing

Apache Hive is ideal for building and managing a distributed data warehouse system. It enables efficient analytics at a massive scale by leveraging the robust capabilities of Apache Hadoop for storing and processing large datasets. Hive's SQL-like HiveQL interface allows users to read, write, and manage petabytes of data, making it accessible and user-friendly for data warehousing tasks.

Business Intelligence Applications

Hive enhances business intelligence by facilitating data extraction, analysis, and processing. Organizations can utilize Hive for complex OLAP applications and business intelligence analytics, allowing them to preprocess and transform data, leading to more informed decision-making. This capability makes Hive indispensable for businesses aiming to leverage their data for competitive advantage.

ETL Pipelines

Hive is an essential component in ETL (Extract, Transform, Load) pipelines. It effectively transforms and loads data, integrating seamlessly with Hadoop. Hive's HCatalog feature manages table and storage layers, ensuring smooth data handling across different stages of the ETL process. This makes Hive a critical tool for maintaining the data pipeline's efficiency and reliability.

Machine Learning Applications

In machine learning, Hive serves mainly as a preprocessing tool. It prepares data for machine learning models, transforming raw data into structured formats suitable for training. Hive can also be used to query model outputs stored as tables, such as predictions and evaluation metrics, offering insights into model performance and enabling further refinement.

Data Exploration and Discovery

Hive facilitates data exploration and discovery by providing a familiar SQL-like querying interface. It allows data scientists and analysts to efficiently query large datasets stored in Hadoop or Amazon S3. This capability makes Hive a valuable tool for uncovering insights and patterns within vast amounts of data, driving data-driven strategies and innovation.

Scalability and Performance

Designed for scalability, Hive efficiently handles petabytes of data using batch processing. Its distributed architecture and integration with Hadoop enable it to scale according to the needs of the organization. Hive's capability to quickly process large datasets makes it an essential tool for businesses dealing with big data challenges.

Storage and Process Management with HCatalog

HCatalog integrates Hive with other Hadoop components like Pig and MapReduce, streamlining data storage and management. This table and storage management layer reads from the Hive metastore, ensuring all data is accessible and organized. HCatalog enhances Hive's functionality, making it a versatile tool in the Hadoop ecosystem.

Why Sourcetable is an Alternative to Hive

Sourcetable stands out as a compelling alternative to Hive due to its user-friendly spreadsheet interface that simplifies data querying and manipulation. With Sourcetable, you can effortlessly collect data from various sources into a single, unified platform.

This real-time data integration allows you to instantly access and analyze data without the need for complex SQL queries. Sourcetable's intuitive design is tailored for users who prefer the simplicity of spreadsheets over traditional database management systems.

Unlike Hive, which requires a deeper understanding of Hadoop and query languages, Sourcetable enables users to perform data operations in a familiar, spreadsheet-like environment. This lowers the barrier to entry and makes data handling accessible to a broader audience.

Moreover, Sourcetable’s flexibility in data manipulation provides an efficient and streamlined approach, helping teams make quicker, data-driven decisions. This makes it an ideal tool for businesses looking to enhance productivity and data accessibility.

Frequently Asked Questions

How do I export a Hive table to a CSV file?

Use the INSERT OVERWRITE DIRECTORY command with the ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' option. You can use the LOCAL keyword to save the CSV file to the local file system. For example: insert overwrite local directory '/home/user/staging' row format delimited fields terminated by ',' select * from my_table;

What Hive version is required to use the --outputformat=csv2 option?

The --outputformat=csv2 option belongs to Beeline and is available in Hive 0.14 and later.
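As a rough sketch, a Beeline export with csv2 output might be wrapped like this. The function name export_csv2 and its arguments are hypothetical; the Beeline options used (-u, -e, --silent, --showHeader, --outputformat) are standard ones.

```shell
# Hypothetical helper: run a query through Beeline and write the result,
# including a header row, as CSV to a local file.
export_csv2() {
  jdbc_url="$1"
  query="$2"
  out_file="$3"
  beeline -u "$jdbc_url" --silent=true --showHeader=true \
    --outputformat=csv2 -e "$query" > "$out_file"
}

# Example (hostname and paths are placeholders):
#   export_csv2 'jdbc:hive2://hostname:10000' 'SELECT * FROM table_name' /home/user/export.csv
```

Unlike the INSERT OVERWRITE DIRECTORY approach, this writes the result set on the client side as a single file.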

How can I concatenate multiple CSV files in Hive?

You can use the cat command to concatenate multiple CSV files. For example: 'cat file1 file2 > file.csv'. You can also use 'hadoop fs -cat' to concatenate files in the HDFS directory into a single CSV file.

Can I export specific columns from a Hive table to CSV?

Yes, you can use a SELECT statement to specify which columns to include in the CSV. For example: insert overwrite local directory '/home/user/staging' row format delimited fields terminated by ',' select column1, column2 from my_table;

How do I handle different delimiters when exporting from Hive to CSV?

You can use bash scripts or the sed command to handle different delimiters. For example: 'hive -e 'select * from some_table' | sed 's/[

Conclusion

Exporting data from Hive to CSV is an essential skill for anyone working with large datasets. Following the steps outlined ensures a smooth and error-free transfer of your data.

Now that you have successfully exported your data, it is ready for further analysis. To take your data analysis to the next level, consider using Sourcetable.

Sign up for Sourcetable to analyze your exported CSV data with AI in a simple-to-use spreadsheet.