Export Databricks to CSV

Introduction

Exporting data from Databricks to a CSV file is a common task for data analysts and scientists.

This guide walks through the main ways to perform the export: manual download, writing to S3, and saving a DataFrame as CSV.

Additionally, we will explore how Sourcetable lets you analyze your exported data with AI in a simple-to-use spreadsheet.

Exporting Data to CSV from Databricks

Manual CSV Export

Databricks allows users to manually download the results of a notebook cell in CSV format, directly from the notebook. This is the simplest method and suits small, one-off exports that do not require automation.

Using Databricks API to Export to S3

Databricks offers the ability to write data to an Amazon S3 bucket in CSV format using its API. After the data is stored in S3, users can download the CSV file from the S3 bucket. This method is suitable for handling larger datasets and automating the export process.
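A minimal sketch of the S3 route, assuming the write happens in a notebook through Spark's DataFrame writer and that the cluster already has credentials for the bucket. The function name, bucket, and prefix are hypothetical; df is any Spark DataFrame.

```python
def write_csv_to_s3(df, bucket, prefix, header=True):
    """Write a Spark DataFrame as CSV under s3://<bucket>/<prefix>/ and return that URI.

    Assumes the cluster can already authenticate to the bucket (for example
    through an instance profile); nothing here configures credentials.
    """
    # Databricks accepts the s3:// scheme; open-source Spark typically needs s3a://.
    uri = f"s3://{bucket}/{prefix.strip('/')}/"
    (
        df.write
        .mode("overwrite")                       # assumption: replace any earlier export
        .option("header", str(header).lower())   # "true" or "false"
        .csv(uri)                                # Spark writes part-*.csv files under uri
    )
    return uri
```

In a notebook this could be called as write_csv_to_s3(df, "my-bucket", "exports/sales"). The returned URI points at a prefix holding one or more part files rather than at a single CSV object.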

Saving DataFrame as CSV Using Commands

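To save a DataFrame as a CSV file in Databricks, use the DataFrame writer. For instance, the command df.coalesce(1).write.format("com.databricks.spark.csv").option("header", "true").save("dbfs:/FileStore/df/df.csv") writes the DataFrame, with a header row, to the Databricks file system (DBFS), where it is accessible via the Databricks GUI. Spark treats the save path as a directory, so df.csv becomes a folder containing a single part-*.csv file; coalesce(1) is what reduces the output to one file. The format name com.databricks.spark.csv comes from the older spark-csv package, and on Spark 2.0 and later the built-in format("csv") is equivalent.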

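Alternatively, the command df.write.format("com.databricks.spark.csv").save("file:///home/yphani/datacsv") writes the CSV output to a directory on the local file system of the machine running Spark, such as a Unix server. Without coalesce(1) the directory may contain several part files, one per partition, and without the header option no header row is written.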
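The commands above can be wrapped in a small helper. This is a sketch rather than a canonical recipe: the function name is hypothetical, the built-in "csv" format is used in place of the legacy package name, and mode("overwrite") is an added assumption so that the export can be rerun.

```python
def write_single_csv(df, path, header=True):
    """Write a Spark DataFrame as CSV to `path`, forcing a single part file.

    `path` becomes a directory; the data lands in one part-*.csv file inside it.
    coalesce(1) funnels every row through one task, so this suits small outputs.
    """
    (
        df.coalesce(1)
        .write
        .format("csv")       # built-in source; "com.databricks.spark.csv" is the legacy name
        .option("header", str(header).lower())
        .mode("overwrite")   # assumption: replace any previous output at this path
        .save(path)
    )
    return path
```

For example, write_single_csv(df, "dbfs:/FileStore/df/df.csv") mirrors the first command, and passing a file:/// path mirrors the second.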

Overview of Export Processes

There are three main ways to export data from Databricks to CSV: manual download from a notebook cell, using the Databricks API to write to S3, and saving DataFrames as CSV using specific commands. Each method suits different needs, ranging from simple, ad-hoc exports to automated, scalable solutions.

How to Export Your Data to CSV Format from Databricks

Manual Download from a Notebook Cell

You can manually download data in CSV format from a Databricks notebook cell. This straightforward method allows you to quickly export data without the need for complex configurations.

Saving DataFrames as CSV

To save a DataFrame as CSV in Databricks, use the command df.write.format("com.databricks.spark.csv").save("filename.csv"). Spark creates a directory named filename.csv that holds the part files. To make the output downloadable, write it to the FileStore instead with the command df.coalesce(1).write.format("com.databricks.spark.csv").option("header", "true").save("dbfs:/FileStore/df/df.csv"). The resulting part file will then be available for download from the FileStore.
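Because the writer produces a directory, downloading usually means locating the part file first. The sketch below assumes the workspace serves FileStore content under its /files/ path; the helper names and the example workspace URL are hypothetical. In a notebook, the listing could come from [f.name for f in dbutils.fs.ls("dbfs:/FileStore/df/df.csv")].

```python
def pick_part_file(names):
    """Return the single part-*.csv name from a directory listing."""
    parts = [n for n in names if n.startswith("part-") and n.endswith(".csv")]
    if len(parts) != 1:
        raise ValueError(f"expected exactly one part file, found {len(parts)}")
    return parts[0]


def filestore_download_url(workspace_url, dbfs_path):
    """Map a dbfs:/FileStore/... path to a browser URL under <workspace>/files/."""
    prefix = "dbfs:/FileStore/"
    if not dbfs_path.startswith(prefix):
        raise ValueError("path must be under dbfs:/FileStore/")
    return f"{workspace_url.rstrip('/')}/files/{dbfs_path[len(prefix):]}"


# Example with a made-up listing and workspace URL.
listing = ["_SUCCESS", "_committed_123", "part-00000-abc.csv"]
part = pick_part_file(listing)
url = filestore_download_url(
    "https://example.cloud.databricks.com/",
    f"dbfs:/FileStore/df/df.csv/{part}",
)
```

Some workspaces may need an extra workspace ID query parameter on the URL or may restrict FileStore access, so treat the URL shape as an assumption to verify.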

Exporting Data to S3

You can also write data to an S3 bucket in CSV format using the Databricks API. This method is useful for integrating with other services and programs that use S3 for storage.

Using the Databricks API

Data can also be exported from Databricks to CSV by running a Databricks notebook inside a workflow that is triggered through the API. The notebook performs the CSV write, while the workflow adds automation and scheduling for regular exports.
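As an illustration, the request that starts such a workflow can be built as follows. This sketch assumes the Jobs API run-now endpoint and an existing job that wraps the export notebook; the host, token, job ID, and the output_path parameter name are placeholders, and the request is only constructed here, not sent.

```python
import json
import urllib.request


def build_run_now_request(host, token, job_id, notebook_params=None):
    """Build (but do not send) a POST to the Jobs API run-now endpoint."""
    body = {"job_id": job_id}
    if notebook_params:
        # Passed to the notebook as widget values, e.g. where to write the CSV.
        body["notebook_params"] = notebook_params
    return urllib.request.Request(
        url=f"{host.rstrip('/')}/api/2.1/jobs/run-now",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder values for illustration only.
req = build_run_now_request(
    "https://example.cloud.databricks.com/",
    "TOKEN",
    123,
    {"output_path": "s3://my-bucket/exports/"},
)
```

Sending the request with urllib.request.urlopen(req) would return a JSON body that includes the run_id of the started run.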

Asynchronous API Calls Using Lambda Functions

An efficient way to manage API calls for exporting data is to issue them from a lambda function. The function starts the export and returns immediately, so the caller does not block while the export runs.
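A rough sketch of this pattern, assuming that "lambda function" here means an AWS Lambda function and that the export is a Databricks job started through the Jobs API run-now endpoint. The environment variable names and the job_id event field are hypothetical.

```python
import json
import os
import urllib.request


def trigger_run(host, token, job_id):
    """POST to the Jobs API run-now endpoint and return the new run_id."""
    req = urllib.request.Request(
        url=f"{host.rstrip('/')}/api/2.1/jobs/run-now",
        data=json.dumps({"job_id": job_id}).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=30) as resp:
        return json.load(resp)["run_id"]


def lambda_handler(event, context, trigger=trigger_run):
    """Lambda entry point: start the export job and return without waiting for it."""
    run_id = trigger(
        os.environ["DATABRICKS_HOST"],    # hypothetical variable names
        os.environ["DATABRICKS_TOKEN"],
        int(event["job_id"]),
    )
    # 202 signals that the export was accepted but has not finished yet.
    return {"statusCode": 202, "body": json.dumps({"run_id": run_id})}
```

The trigger parameter exists only so the handler can be exercised without network access; Lambda itself calls the handler with just event and context.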

Use Cases for Databricks

Predictive Analytics in Retail

Databricks enables retailers to leverage predictive analytics for demand forecasting, inventory management, and sales optimization. By utilizing Databricks' unified data management tools and in-memory analytics capabilities through Spark, retailers can analyze large datasets quickly and accurately, improving decision-making and customer satisfaction.

Fraud Detection in Finance

Financial institutions use Databricks to detect and prevent fraud more efficiently. With advanced threat detection and real-time data processing, Databricks allows for the rapid identification of suspicious activities, enhancing security measures and protecting sensitive financial information.

Personalized Healthcare

Healthcare providers can use Databricks to deliver personalized care plans by analyzing patient data. The platform's support for both structured and unstructured data helps in creating comprehensive health profiles, leading to better patient outcomes and more targeted treatments.

Optimizing Energy Production and Distribution

Databricks helps energy companies optimize production and distribution by analyzing large volumes of sensor data and other information. This leads to more efficient energy usage, cost savings, and reduced environmental impact.

Enhancing Customer Experience in E-Commerce

With Databricks, e-commerce platforms can enhance customer experiences through personalized recommendations and targeted marketing. The platform's data science and machine learning tools allow for the development of advanced recommendation systems and analytics-driven customer insights.

Streamlining Supply Chain Management

Databricks simplifies supply chain management by enabling real-time analytics and predictive modeling. Companies can better predict demand, manage inventory, and optimize logistics, resulting in increased efficiency and reduced operational costs.

Improving Cybersecurity with Advanced Threat Detection

Organizations use Databricks to improve cybersecurity by leveraging its advanced threat detection capabilities. The platform's ability to quickly analyze new data sources helps in identifying potential threats and mitigating risks proactively.

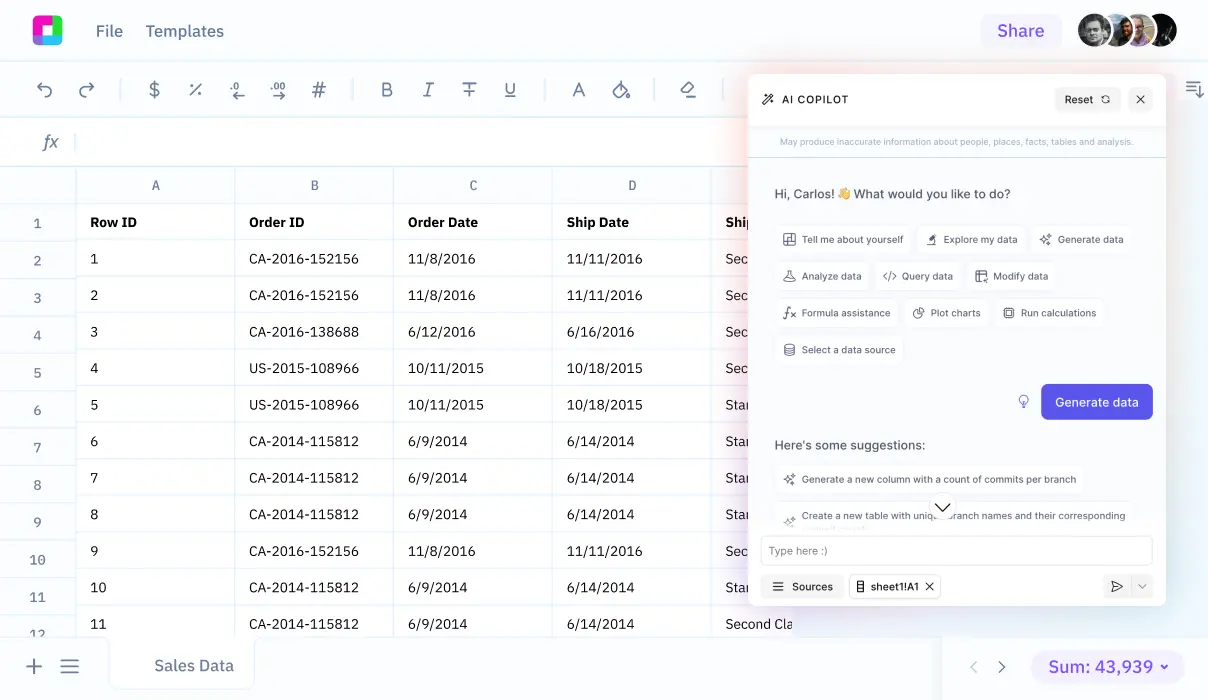

Why Choose Sourcetable Over Databricks?

Sourcetable is a spreadsheet that aggregates all your data in one place from various data sources. It provides a unified interface to view and interact with your data.

With Sourcetable, querying data is simplified. You can extract real-time information directly from your databases without needing complex configurations or data pipelines.

The spreadsheet-like interface in Sourcetable allows for intuitive data manipulation. Users can execute powerful queries and analyses without extensive training or coding knowledge.

Sourcetable excels in accessibility. Its familiar interface ensures that both technical and non-technical team members can collaborate effectively on data tasks.

Frequently Asked Questions

How can I manually download data from a Databricks notebook as a CSV?

You can manually download data in CSV format directly from a Databricks notebook cell to your local machine.

How can I export data from Databricks to a CSV using an API?

You can write data to an S3 bucket in CSV format using the Databricks API. Once the data is in the S3 bucket, you can download the CSV file from there.

Can I use a workflow to export data from Databricks to CSV?

Yes. You can use an API to run a Databricks notebook in a workflow. The notebook writes the data to an S3 bucket in CSV format, and the API returns the S3 location. You can then download the file from the S3 bucket.
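One way the S3 location could be returned, sketched under assumptions: the notebook ends with dbutils.notebook.exit("<s3 uri>"), and the caller reads the body of the Jobs API runs/get-output response, where that string appears under notebook_output.result. The function name and sample values are hypothetical.

```python
def extract_s3_location(run_output):
    """Pull the S3 URI out of a parsed runs/get-output response body.

    Assumes the notebook passed the URI to dbutils.notebook.exit(), so it
    arrives as the string in notebook_output.result.
    """
    result = run_output.get("notebook_output", {}).get("result")
    if not result or not result.startswith(("s3://", "s3a://")):
        raise ValueError(f"no S3 location in notebook output: {result!r}")
    return result


# Made-up response body for illustration.
sample = {
    "notebook_output": {"result": "s3://my-bucket/exports/sales/", "truncated": False},
}
location = extract_s3_location(sample)
```

The returned location can then be handed to whatever tool downloads the CSV from S3.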

Is it possible to pass downloaded CSV files from Databricks to other applications?

Yes, after downloading the CSV file from a Databricks notebook to your local machine, you can pass the file to another application as needed.

Conclusion

Exporting data from Databricks to CSV is a straightforward process that keeps your data easily accessible and shareable. By following the steps outlined, you can convert your data into a widely used format.

Efficient data handling is crucial for any data-driven project. Once your CSV file is ready, you can leverage advanced tools to gain deeper insights.

Sign up for Sourcetable to analyze your exported CSV data with AI in a simple-to-use spreadsheet.